What Is MCP — the USB-C for AI?

The Model Context Protocol (MCP) is an open standard that lets AI applications talk to external data and tools in a predictable, secure way, much like USB-C for devices: one connector, many use cases. Introduced by Anthropic in late 2024 and since adopted by OpenAI, Google, and the wider community, MCP connects language models to more than a chat window. It links them to real systems such as databases, APIs, file systems, and document platforms.

Adoption is not niche: ecosystems report 1000+ community servers and integrations across desktop clients, IDEs, and assistants. For enterprises, that means fewer one-off connectors: a reusable layer you can audit, version, and run with explicit permissions.

Why Enterprise AI Needs a Protocol

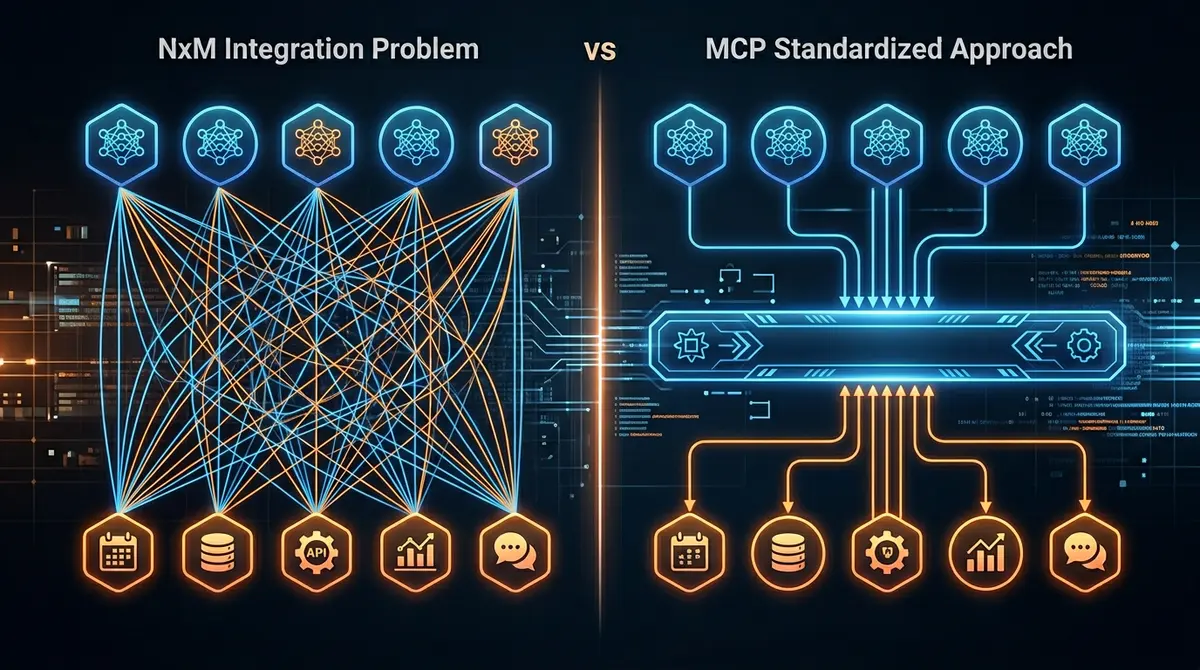

Without a shared standard, the classic N×M problem appears: N AI clients meet M backends, and every team reinvents adapters, secret handling, and error semantics. Prompts become fragile because they implicitly encode knowledge of internal URLs, JSON shapes, and edge cases. At the same time, context limits bite: documents, metadata, and tool outputs must be moved deliberately, not by stuffing everything into the context window.
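The scaling argument can be made concrete with a little arithmetic. The sketch below assumes that, without a shared protocol, every client needs one bespoke adapter per backend, and that with one, each client and each backend implements the protocol once. The function names and example sizes are illustrative.

```python
def adapters_without_protocol(n_clients: int, m_backends: int) -> int:
    """Each client needs a bespoke adapter for each backend: N x M."""
    return n_clients * m_backends


def adapters_with_protocol(n_clients: int, m_backends: int) -> int:
    """Each side implements the shared protocol once: N + M."""
    return n_clients + m_backends


if __name__ == "__main__":
    n, m = 5, 20  # assumed example sizes
    print(adapters_without_protocol(n, m))  # 100
    print(adapters_with_protocol(n, m))     # 25
```

The gap widens as either side grows, which is why the cost of one-off connectors tends to show up late and all at once.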

A protocol like MCP addresses these structural issues: discoverable tools, typed inputs/outputs, clear transport semantics — and less glue code to rewrite on every model change.
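To illustrate what "typed inputs" buys you: MCP tools describe their arguments with a JSON Schema, so arguments can be checked before anything executes. The following is a minimal sketch that covers only a small subset of JSON Schema (required fields and a few primitive types). The helper name `validate_arguments` is hypothetical, and rejecting unknown arguments is a stricter choice than JSON Schema's default; a production system would more likely use a full JSON Schema validator.

```python
from typing import Any

# Maps the JSON Schema type names handled here to Python types (subset only).
_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def validate_arguments(schema: dict[str, Any], arguments: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the arguments pass."""
    problems: list[str] = []
    properties = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in arguments:
            problems.append(f"missing required argument: {name}")
    for name, value in arguments.items():
        if name not in properties:
            # Design choice of this sketch: unknown arguments are rejected.
            problems.append(f"unexpected argument: {name}")
            continue
        type_name = properties[name].get("type")
        expected = _TYPES.get(type_name)
        if expected is None:
            continue  # type not covered by this sketch
        # bool is a subclass of int in Python, so treat it separately.
        is_bool_mismatch = isinstance(value, bool) and type_name != "boolean"
        if is_bool_mismatch or not isinstance(value, expected):
            problems.append(f"wrong type for {name}: expected {type_name}")
    return problems


if __name__ == "__main__":
    schema = {
        "type": "object",
        "properties": {"text": {"type": "string"}, "page": {"type": "integer"}},
        "required": ["text"],
    }
    print(validate_arguments(schema, {"text": "hello", "page": 1}))
    print(validate_arguments(schema, {"page": "one"}))
```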

“MCP is not a substitute for governance — it is the standard plug under which governance can scale.”

How MCP Works: Client, Server, Tools

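Architecturally, MCP separates concerns cleanly: an MCP host (e.g., an AI application or IDE) runs one MCP client per connection, and each client speaks JSON-RPC 2.0 to a single MCP server. The specification defines two standard transports: stdio for local servers and Streamable HTTP for remote ones (the latter replaced the earlier HTTP with Server-Sent Events transport). WebSockets is not a standard transport, although custom transports are permitted. Servers expose tools (functions), resources (readable context), and optionally prompts. The model selects suitable operations, and the client issues the calls on its behalf.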
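On the wire, the client/server exchange consists of JSON-RPC 2.0 messages such as `tools/list` and `tools/call`. The sketch below mimics a tiny server-side dispatcher in plain Python to show the message shapes. It is not a real MCP server: it skips the `initialize` handshake and any transport, and the tool `classify_document` is a hypothetical example.

```python
import json
from typing import Any

# Hypothetical tool definition, for illustration only.
TOOLS = [
    {
        "name": "classify_document",
        "description": "Return a coarse document type for the given text.",
        "inputSchema": {
            "type": "object",
            "properties": {"text": {"type": "string"}},
            "required": ["text"],
        },
    }
]


def classify_document(text: str) -> str:
    """Toy stand-in for real classification logic."""
    return "invoice" if "invoice" in text.lower() else "other"


def handle(request: dict[str, Any]) -> dict[str, Any]:
    """Dispatch one JSON-RPC 2.0 request the way a minimal server might."""
    base = {"jsonrpc": "2.0", "id": request["id"]}
    method = request["method"]
    if method == "tools/list":
        return {**base, "result": {"tools": TOOLS}}
    if method == "tools/call":
        params = request["params"]
        if params["name"] != "classify_document":
            return {**base, "error": {"code": -32602, "message": "Unknown tool"}}
        label = classify_document(**params["arguments"])
        result = {"content": [{"type": "text", "text": label}], "isError": False}
        return {**base, "result": result}
    return {**base, "error": {"code": -32601, "message": "Method not found"}}


if __name__ == "__main__":
    listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    call = handle({
        "jsonrpc": "2.0",
        "id": 2,
        "method": "tools/call",
        "params": {"name": "classify_document", "arguments": {"text": "Invoice #1"}},
    })
    print(json.dumps(listing, indent=2))
    print(json.dumps(call, indent=2))
```

In a real deployment the same messages would travel over stdio or Streamable HTTP, and an SDK would normally handle the framing and handshake.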

Compared to older integration styles, this is a deliberate middle ground: not monolithic, not a patchwork of ad-hoc REST calls.

| Dimension | REST API (classic) | RAG (retrieval) | MCP |
|---|---|---|---|
| Primary focus | CRUD & business functions | Context from knowledge bases | Tool & context orchestration for AI |
| Context binding | Caller assembles context | Embeddings + search | Resources + structured tool outputs |
| Discoverability | OpenAPI/docs (manual) | Indexes/pipelines | Capability handshake, server metadata |
| Fit for LLM agents | Medium (many custom adapters) | High for “fetch knowledge” | High for “act + contextualize” |
| Typical weakness | Chatty integration, fragmentation | Hallucination risk with bad sources | Policy & governance required |

MCP in Document Processing

In practice, Claude Desktop, ChatGPT (with connectors), or Cursor can — via MCP — reach your document pipeline: classification, extraction, quality checks, handoff to ERP or archive. Instead of screenshots or copy-paste, you run operations that can be logged end-to-end.

For Document AI, that is a leap from “text in a window” to tool-driven processing: the model stays the router; execution stays atomic on the platform.
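One way to picture "operations that can be logged end-to-end" is a thin wrapper that records every tool execution, whether it succeeds or fails. This is a sketch under assumptions: the in-memory `AUDIT_LOG`, the wrapper `audited_call`, and the tool `extract_total` are all hypothetical and stand in for whatever audit store and tools a platform actually provides.

```python
import time
from typing import Any, Callable

AUDIT_LOG: list[dict[str, Any]] = []  # in-memory stand-in for a real audit store


def audited_call(tool_name: str, tool: Callable[..., Any], arguments: dict[str, Any]) -> Any:
    """Run one tool call and record its name, arguments, outcome, and timing."""
    entry: dict[str, Any] = {
        "tool": tool_name,
        "arguments": arguments,
        "started_at": time.time(),
    }
    try:
        result = tool(**arguments)
        entry["status"] = "ok"
        return result
    except Exception as exc:
        entry["status"] = "error"
        entry["error"] = repr(exc)
        raise
    finally:
        # Runs on both paths, so failed calls are logged too.
        entry["duration_s"] = time.time() - entry["started_at"]
        AUDIT_LOG.append(entry)


def extract_total(text: str) -> str:
    """Hypothetical extraction tool: return the token following 'Total:'."""
    return text.split("Total:")[1].split()[0]


if __name__ == "__main__":
    print(audited_call("extract_total", extract_total, {"text": "Invoice 42 Total: 19.99 EUR"}))
    print(AUDIT_LOG[-1]["status"])
```

The model only decides which tool to call; the call itself runs as one unit on the platform side, and the log entry exists regardless of what the model does next.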

PaperOffice as an MCP Server: 443+ Tools for Any AI

PaperOffice AI provides an MCP server that exposes a broad toolkit of 443+ atomic tools — from OCR and AI-IDP to integration, security, and vertical scenarios. Tools are maintained as a single source of truth in the database; MCP enables auto-discovery, so clients load capabilities dynamically instead of hardcoding endpoint lists.
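Auto-discovery means a client can build its tool registry from the server's `tools/list` response instead of shipping a hardcoded list. The sketch below shows the idea with a sample response. The tool names are hypothetical and do not come from the PaperOffice catalog, and pagination of large tool lists is ignored for brevity.

```python
from typing import Any

# Sample tools/list result with hypothetical tools, for illustration only.
SAMPLE_LIST_RESULT: dict[str, Any] = {
    "tools": [
        {
            "name": "ocr_page",
            "description": "Run OCR on one page.",
            "inputSchema": {
                "type": "object",
                "properties": {"page_id": {"type": "string"}},
                "required": ["page_id"],
            },
        },
        {
            "name": "classify_document",
            "description": "Return a coarse document type.",
            "inputSchema": {
                "type": "object",
                "properties": {"text": {"type": "string"}},
                "required": ["text"],
            },
        },
    ]
}


def build_registry(list_result: dict[str, Any]) -> dict[str, dict[str, Any]]:
    """Index the tools from a tools/list result by name."""
    return {tool["name"]: tool for tool in list_result["tools"]}


def required_arguments(registry: dict[str, dict[str, Any]], tool_name: str) -> list[str]:
    """Look up which arguments a discovered tool requires."""
    return list(registry[tool_name]["inputSchema"].get("required", []))


if __name__ == "__main__":
    registry = build_registry(SAMPLE_LIST_RESULT)
    print(sorted(registry))
    print(required_arguments(registry, "ocr_page"))
```

When the server adds or changes a tool, the client picks it up on the next listing without a code change on its side.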

Permissions and org scopes remain enterprise-grade: what the model may call is decided by your policy — not an undocumented side channel.
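A policy gate of this kind can be sketched as an allowlist that maps scopes to callable tools and is checked before any execution. This is an illustration only, not PaperOffice's actual permission model: the scope names, tool names, and the `authorize` helper are assumptions.

```python
# Hypothetical policy: which tools each granted scope unlocks.
POLICY: dict[str, set[str]] = {
    "documents:read": {"ocr_page", "classify_document"},
    "documents:write": {"archive_document"},
}


def allowed_tools(granted_scopes: set[str]) -> set[str]:
    """Union of all tools unlocked by the caller's scopes."""
    allowed: set[str] = set()
    for scope in granted_scopes:
        allowed |= POLICY.get(scope, set())
    return allowed


def authorize(tool_name: str, granted_scopes: set[str]) -> None:
    """Raise PermissionError unless policy permits the tool for these scopes."""
    if tool_name not in allowed_tools(granted_scopes):
        raise PermissionError(
            f"Tool {tool_name!r} is not permitted for scopes {sorted(granted_scopes)}"
        )


if __name__ == "__main__":
    authorize("ocr_page", {"documents:read"})  # passes silently
    try:
        authorize("archive_document", {"documents:read"})
    except PermissionError as exc:
        print(exc)
```

Because the check sits outside the model, a prompt cannot widen the set of callable tools; only a policy change can.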

From Document Inference to Architectural Reasoning

We are moving from AI that “reads a document” to AI that tackles architecture and system questions: which pipeline, which data quality bar, which compliance chain, which integration is correct? MCP is the bridge so these questions become operational — with explicit tool calls and reproducible outcomes, not just rhetoric.

“Security does not end at the protocol: it is decided in scopes, reviews, and operations — not in the model prompt alone.”

Risks and Limits of MCP

Protocols are not magic. Prompt injection, overly powerful tools, and weak governance remain risks — MCP shapes the surface, it does not replace policy. Ecosystem maturity varies; not every server is production-ready. Still, transparency, scoping, and auditability are easier when the interface is standardized.

Conclusion: MCP-First Is the New API-First

If you integrate today, you think API-first — tomorrow’s advantage is MCP-first: the same atomic capability, but directly for AI clients with less integration friction. For Document AI, this is the consistent next step: models route, tools execute — with MCP as the lingua franca between your document platform and the AI ecosystem.